The drive may or may not support the Write-Read-Verify feature set.

But the failure can be detected on write, and a spare sector will be pulled out of the reallocation pool. It can also be detected on read, with the failure case you've already identified. Some of the higher-end RAID arrays (and of course ZFS) have background scanning routines that read storage during idle times specifically to locate these errors. In parity or mirrored RAID, you theoretically have a good copy elsewhere, which makes recovery easy.

An SSD cell that fails to program will return a similar fail-state, and a fresh block will be pulled out of the reallocation pool. Worn-out SSD cells tend to go read-only, so the data is recoverable. Actually broken cells are another story, same as a bit of grit landing on a drive platter.
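To make the background-scanning idea concrete, here is a minimal sketch of a scrub pass over a two-way mirror. It is a toy model, not how any particular RAID controller or ZFS implements it: the `scrub` and `checksum` functions, the in-memory lists standing in for disks, and the separately stored SHA-256 checksums are all assumptions made for illustration. Real arrays may rely on the drive's own read-error reports or on parity instead.

```python
import hashlib


def checksum(block: bytes) -> str:
    """Hypothetical per-block checksum, stored separately from the data."""
    return hashlib.sha256(block).hexdigest()


def scrub(mirror_a, mirror_b, checksums):
    """Read every block on both mirrors and repair a bad copy from the good one.

    Returns a list of (mirror_name, block_index) pairs that were repaired.
    """
    repaired = []
    for i, expected in enumerate(checksums):
        a_ok = checksum(mirror_a[i]) == expected
        b_ok = checksum(mirror_b[i]) == expected
        if a_ok and not b_ok:
            mirror_b[i] = mirror_a[i]   # rewrite the bad copy from the good one
            repaired.append(("b", i))
        elif b_ok and not a_ok:
            mirror_a[i] = mirror_b[i]
            repaired.append(("a", i))
        elif not a_ok and not b_ok:
            raise IOError(f"block {i}: no good copy left")
    return repaired


blocks = [b"alpha", b"beta", b"gamma"]
sums = [checksum(blk) for blk in blocks]
a = list(blocks)
b = list(blocks)
b[1] = b"bxta"                 # simulate silent corruption on one mirror
print(scrub(a, b, sums))       # [('b', 1)]
```

The point of scanning during idle time is that the bad copy is found while the good copy still exists, rather than at the moment someone needs the data.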

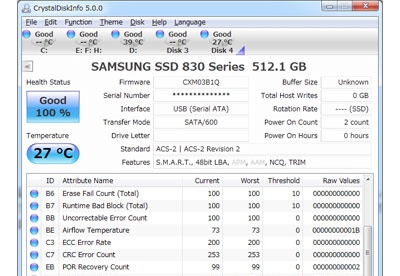

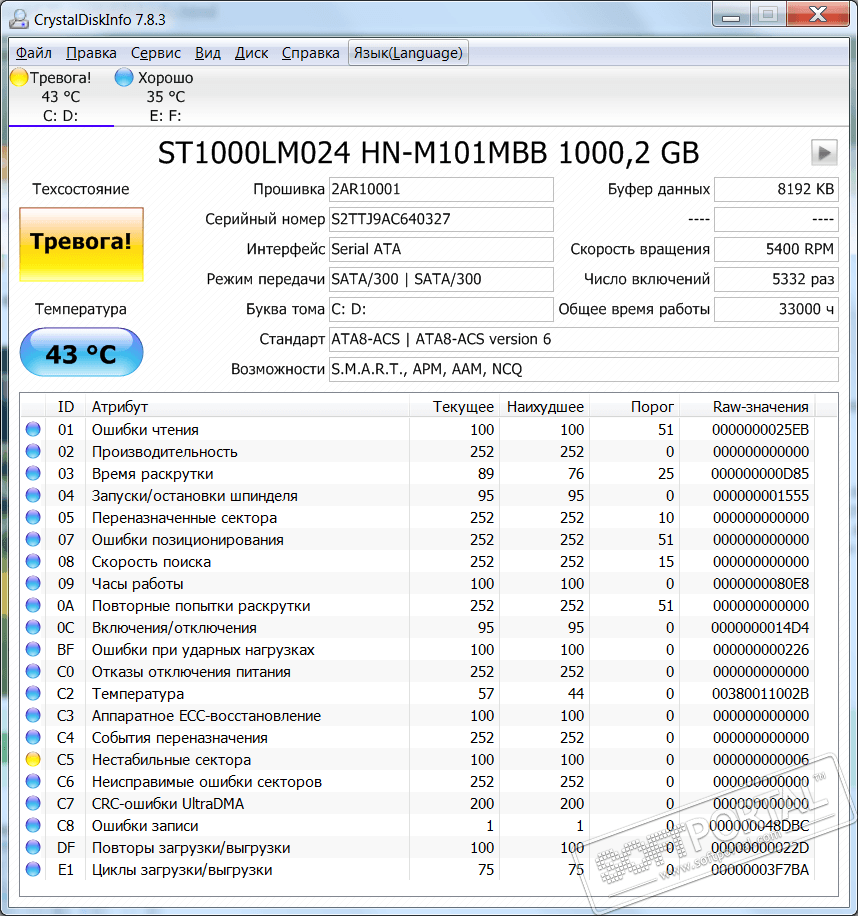

Now my question is: when does the HDD firmware determine that a sector should be replaced?

My idea is that when an HDD writes a sector, it does read verification immediately (verifying the CRC checksum and employing ECC to recover soft-error bits). If the verification fails or the sector is deemed unreliable by the firmware, the firmware can immediately replace the sector so no user data is lost (at least not at the moment it is written to the disk surface). This is a nice moment to do sector replacement because the to-be-written data surely still remains in the hard drive's internal cache. The drive can try as many "hot spare" sectors as it likes until it finds a good one.

If my idea is not the fact, then the HDD can only verify the written sector the next time the user fetches it. But if that fetch fails CRC and ECC, and the corresponding cache has been discarded, we can do nothing but accept that the previously written data has been permanently lost. Which behavior is the truth? Are there any web articles clarifying this?

SSDs should face the same situation, so what about SSDs? The SSD case seems easier to understand, because I can easily imagine an SSD doing a quick read verification right after a write. However, is such a scheme practical for a spinning hard drive? It seems that the HDD head can either read or write at any given time, so in order to verify a write it has to wait another revolution until the written sector is positioned under the head again, which would drastically slow down data throughput. I'm so baffled.

Drives do perform a form of write-verification, and have been doing so for quite some time. Back in the olden days of loud drives, you could audibly tell when a drive was grinding on a bad block from the sound of it. I can't explain the exact mechanism, and I'm fairly sure the exact way has shifted a bit as we've moved through the various magnetic technologies.
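The write-then-verify idea discussed here can be sketched as a toy simulation. This is only an illustration of the logic (write, read back, compare a CRC, remap to a spare on failure while the data is still held in memory), not a description of real firmware. The `ToyDrive` class, its fields, and the way a bad sector corrupts data are all hypothetical.

```python
import zlib


class ToyDrive:
    """Toy model of write-verify with spare-sector remapping (not real firmware)."""

    def __init__(self, num_sectors, num_spares, bad_physical=()):
        self.media = {}                 # physical sector -> stored bytes
        self.bad = set(bad_physical)    # physical sectors that corrupt what they store
        self.remap = {}                 # logical sector -> spare physical sector
        self.spares = list(range(num_sectors, num_sectors + num_spares))
        self.reallocated_count = 0

    def _raw_write(self, phys, data):
        # A bad sector stores inverted bytes instead of the real data.
        if phys in self.bad:
            data = bytes(byte ^ 0xFF for byte in data)
        self.media[phys] = data

    def write(self, logical, data):
        """Write, read back, and remap to a spare until verification passes."""
        phys = self.remap.get(logical, logical)
        expected = zlib.crc32(data)
        while True:
            self._raw_write(phys, data)
            if zlib.crc32(self.media[phys]) == expected:
                return phys             # verified: data is safely on the media
            if not self.spares:
                raise IOError("no spare sectors left")
            # 'data' is still in memory (the cache), so nothing is lost yet.
            phys = self.spares.pop(0)
            self.remap[logical] = phys
            self.reallocated_count += 1

    def read(self, logical):
        return self.media[self.remap.get(logical, logical)]


drive = ToyDrive(num_sectors=8, num_spares=2, bad_physical={3, 8})
final_location = drive.write(3, b"hello")
print(final_location, drive.reallocated_count, drive.read(3))
```

In this run, logical sector 3 and the first spare (physical 8) are both bad, so the write only succeeds on the second spare. That mirrors the "try as many spares as needed" behavior described in the question.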
As we all know, modern hard disk drives do bad sector management inside their firmware: when the drive detects a physically damaged or unreliable sector, it replaces the bad one with a good one from the reserved sector store. This mechanism eliminates the need for the OS to do bad sector management itself. Users can track the sector replacement count through the S.M.A.R.T. attribute called "reallocated sector count".

In a sample HDD with too many reallocated sectors, the index 133 drops below the threshold 140.
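As a small illustration of how the "index versus threshold" comparison for the reallocated sector count works, the sketch below models a single S.M.A.R.T. attribute. The `SmartAttribute` class and its field names are made up for this example. The rule it encodes is the usual S.M.A.R.T. convention: an attribute counts as failed once its normalized value is at or below the vendor threshold.

```python
from dataclasses import dataclass


@dataclass
class SmartAttribute:
    """Hypothetical container for one S.M.A.R.T. attribute."""
    name: str
    value: int       # normalized value reported by the drive (higher is healthier)
    threshold: int   # vendor-defined failure threshold

    def is_failing(self) -> bool:
        # Failed when the normalized value is at or below the threshold.
        return self.value <= self.threshold


# Numbers taken from the sample drive mentioned in the text.
attr = SmartAttribute("Reallocated_Sector_Ct", value=133, threshold=140)
print(attr.name, "FAILING" if attr.is_failing() else "ok")
```

Note that the normalized value is a vendor-scaled health score, not the raw number of reallocated sectors. The raw count is reported separately.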
